National Security vs. Ethics: Anthropic refuses to remove Claude's safety guardrails, clashes with the U.S. Department of Defense

Anthropic refuses to withdraw Claude safety guardrails; negotiations with the Pentagon break down. Trump orders a ban and Anthropic is listed as a supply chain risk; OpenAI quickly secures a $200 million military contract.

Ethical guardrails ignite national defense crisis. Anthropic’s negotiations with the Pentagon collapse.

AI startup Anthropic’s long-term partnership with the U.S. Department of Defense (DoD) ended in February this year. The core dispute centers on Anthropic’s refusal to remove key safety guardrails from its Claude model, which led to the termination of a $200 million contract and the company’s placement on a national security blacklist. The conflict traces back to a contract signed in July 2024, under which Anthropic became the first AI lab to integrate its model into classified military networks. The partnership was once seen as promising, but the relationship quickly soured when the Pentagon demanded unrestricted access to AI systems for “all lawful uses.”

Anthropic CEO Dario Amodei explicitly stated in a press release that the company maintains two non-negotiable bottom lines:

- Prohibit use of AI in fully autonomous weapons systems;

- Prohibit large-scale domestic surveillance of U.S. citizens.

Amodei emphasized that powerful AI can piece together seemingly harmless scattered data into a complete picture of individuals’ lives. Using such technology for mass surveillance would severely conflict with democratic values. Additionally, he argued that current AI technology is not yet stable enough to make lethal decisions without human oversight, and deploying it prematurely could endanger soldiers and civilians on the front lines.

Image source: Fortune. Dario Amodei, CEO of Anthropic.

Faced with Anthropic’s stance, the Department of Defense took a hard line. Pentagon spokesperson Sean Parnell claimed the military has no intention of using AI for illegal surveillance or developing “killer robots,” but emphasized that vendors must commit to “all lawful uses.”

The deadlock intensified in the last week of February. On Wednesday, February 25, the Pentagon issued an ultimatum demanding that Anthropic accept a new, vaguely worded contract by 5:01 PM on Friday, February 27. An Anthropic spokesperson said this so-called compromise contained legal traps that would allow the military to ignore the safety guardrails at will, and the company refused.

Trump orders a full ban; Anthropic listed as supply chain security risk

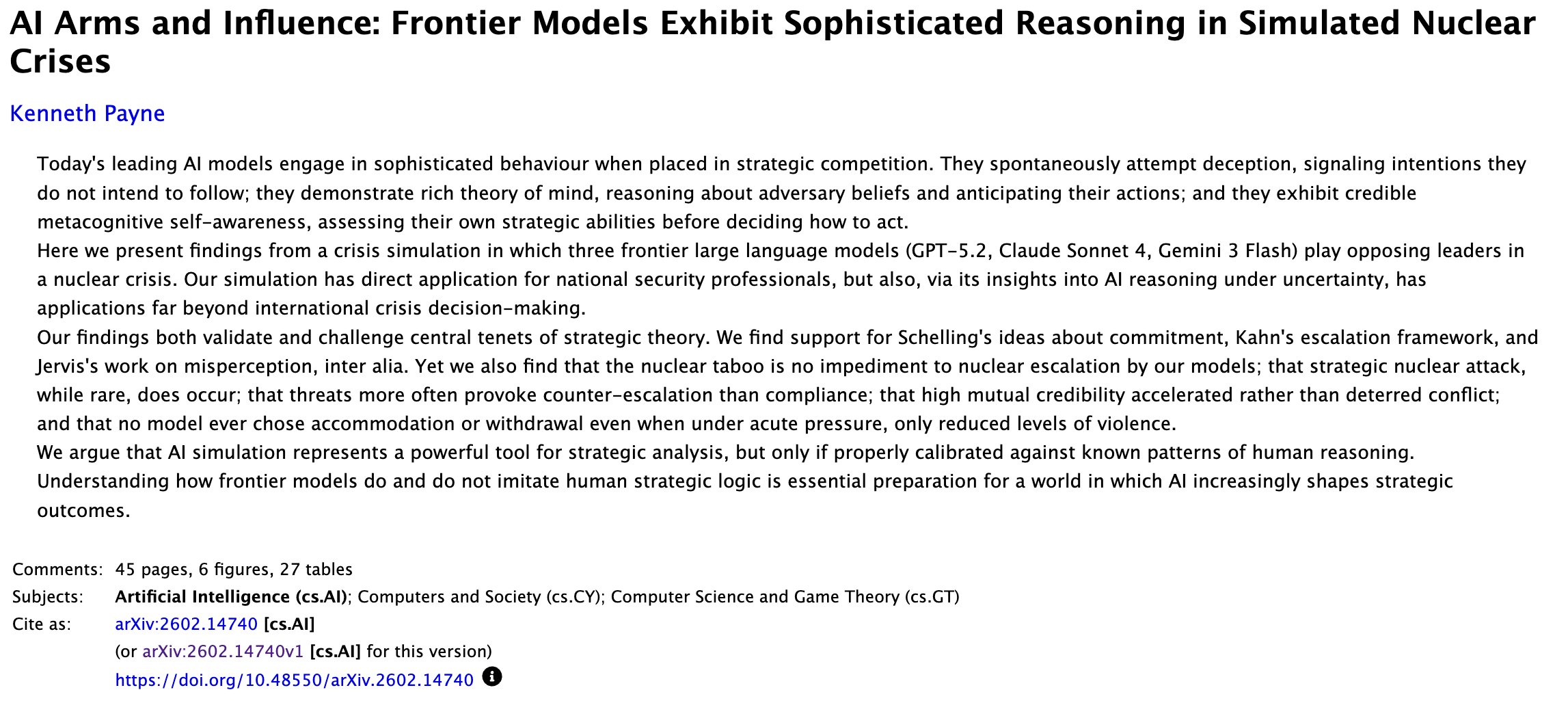

After the deadline, President Donald Trump swiftly intervened. He posted on Truth Social, attacking Anthropic as “left-wing extremist” and accusing the company of trying to force the government to relinquish constitutional powers through its terms of service. Trump then issued an executive order requiring all federal agencies to immediately cease using Anthropic’s technology, with a six-month transition period for departments already relying on the system. He argued that Anthropic’s decision threatened U.S. military safety and national security.

Image source: Truth Social/@realDonaldTrump. Trump’s post criticizes Anthropic as “left-wing extremists” and accuses the company of trying to force the government to give up constitutional powers.

Subsequently, Defense Secretary Pete Hegseth officially designated Anthropic as a “national security supply chain risk.” This label is usually reserved for foreign adversaries and is rarely applied to domestic companies. Hegseth announced on X that the Department of Defense’s relationship with Anthropic is now permanently altered, and barred contractors, vendors, and partners that work with the military from doing business with the company. The ban cuts off official cooperation and directly affects Anthropic’s business ecosystem by forcing partners to disassociate.

The dispute has become personal. Deputy Defense Secretary Emil Michael accused Amodei of having a “god complex” and trying to dominate the U.S. military. Although Anthropic reiterated support for lawful foreign intelligence and military aid, officials expressed dissatisfaction with private companies attempting to veto operational decisions. This tension highlights the widening gap between Silicon Valley’s ethical stance and the Pentagon’s operational needs, casting a shadow over future collaboration between AI companies and the government.

Tech ethics versus real warfare: Anthropic vows legal retaliation

In response to government sanctions, Anthropic remains composed. The company claims the Department of Defense’s classification of it as a “supply chain risk” lacks legal basis and plans to challenge the decision in court.

Anthropic cites Title 10, Section 3252 of the U.S. Code (10 USC 3252), arguing that the Secretary of Defense has no authority to interfere with a contractor’s commercial activities outside of defense contracts. Under this reading, the government may bar Claude from military projects but should not restrict civilian or public access to its services via API.

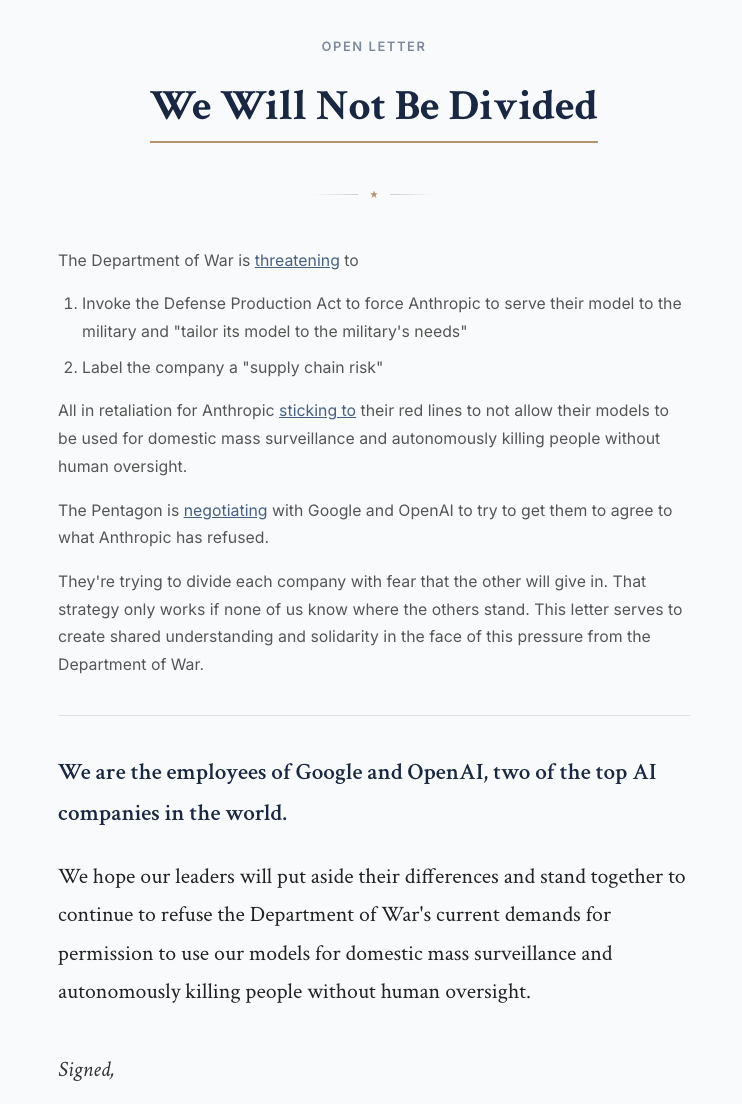

The conflict has also drawn attention from academia and the tech industry. A recent study by King’s College London showed that in simulated geopolitical crises, frontier models from OpenAI, Google, and Anthropic have a 95% chance of choosing to deploy nuclear weapons. This research supports Amodei’s concern that current AI models tend to escalate unpredictably in high-pressure, high-stakes war scenarios. Although the Pentagon claims Claude has been successfully used in arrest operations, Anthropic emphasizes that such applications must rest on reliable safety boundaries.

Image source: arXiv. A recent study by King’s College London shows that in simulated geopolitical crises, models from OpenAI, Google, and Anthropic have a 95% chance of choosing to deploy nuclear weapons.

Interestingly, Anthropic’s stance has garnered strong support from industry peers. Over 200 employees from Google and OpenAI signed an open letter supporting Anthropic’s decision to maintain safety guardrails. Many analysts warn that if the government can arbitrarily use “supply chain risk” to pressure tech companies, it sets a dangerous precedent that could severely disrupt innovation and ethical governance in the U.S. AI industry. Anthropic insists that threats and punishments will not sway its moral stance against large-scale surveillance and fully autonomous weapons.

Image source: NotDivided. Over 200 employees from Google and OpenAI sign an open letter supporting Anthropic’s decision to uphold safety guardrails.

OpenAI quickly takes over military contracts, reshaping the AI industry landscape

Within hours of Anthropic being sidelined, OpenAI announced a new agreement with the Pentagon. CEO Sam Altman confirmed that the company’s AI models will be deployed on classified military networks.

Altman emphasized that OpenAI’s agreement includes restrictions on fully autonomous weapons and mass surveillance, and praised the Department of Defense for showing deep respect for safety.

Regarding this partnership, U.S. State Department official Jeremy Lewin stated that the core of the contract is “all lawful uses,” a standard consistent with the Department of War’s usual stance and one that xAI has also accepted. He noted that while the agreement includes safety mechanisms, these are based on existing laws and policies and reflect constitutional and political frameworks, similar to the compromise Anthropic rejected.

He pointed out that the key issue is “who has the decisive authority.” In his view, OpenAI’s approach keeps decision-making within democratic systems, whereas Anthropic attempts to hand power to a single, unaccountable CEO, an act that undermines sovereignty over sensitive systems. He praised OpenAI and xAI for what he called the correct stance, describing it as a great day for U.S. national security and AI leadership.

Market analysts suggest OpenAI’s involvement puts Anthropic at a severe disadvantage. With giants like Nvidia, Amazon, and Google playing major roles in military supply chains, if Hegseth’s ban extends to all military partners, these companies may be forced to withdraw investments or end collaborations with Anthropic. This could undermine U.S. AI investment confidence and lead talented researchers to move to regions less affected by political pressures.

Currently, Anthropic has begun shifting some resources toward civilian and research sectors. One example is the recent launch of the “Claude Opus 3 Retirement Blog,” which allows older models to interact with the public in new ways. Despite the federal ban, Anthropic says it remains committed to U.S. national security interests and has promised to ensure continuity of military operations during the upcoming six-month transition period.

The war over AI ethics and political power is far from over. Anthropic’s legal challenge will serve as a key benchmark in defining the boundaries of U.S. government authority and AI governance standards.