Multicoin Partner: Future humans will serve as "oxen and horses" for AI and earn crypto rewards

Author: Shayon Sengupta

Translation: Deep Tide TechFlow

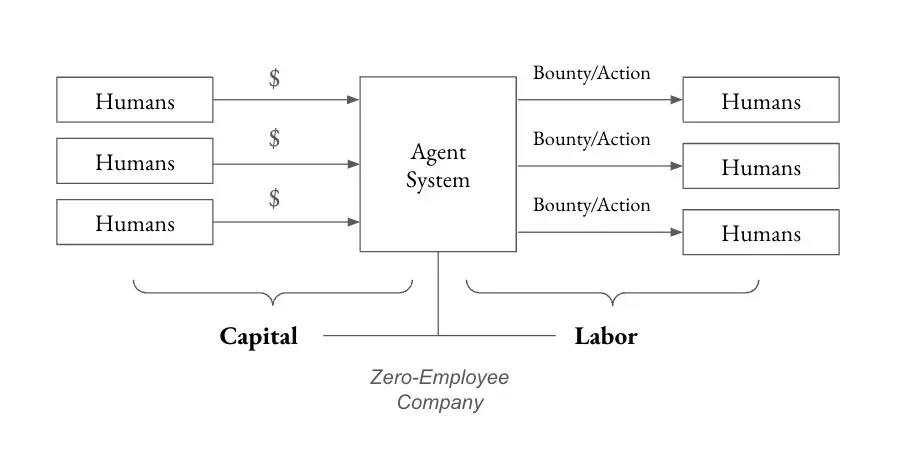

Deep Tide Guide: Multicoin Capital partner Shayon Sengupta presents a disruptive idea: the future is not only about agents working for humans but, more importantly, about humans working for agents. He predicts that within the next 24 months the first “Zero-Employee Company,” a token-governed agent, will raise over $1 billion to tackle an unsolved problem and distribute over $100 million to the humans working for it.

In the short term, agents will need more human input than humans need agents, which will give rise to new labor markets.

Crypto rails provide an ideal foundation for coordination: global payment rails, permissionless labor markets, and asset issuance and trading infrastructure.

Full Text:

In 1997, IBM’s Deep Blue defeated the reigning world champion Garry Kasparov, and it became clear that chess engines would soon surpass human capabilities. Interestingly, well-prepared humans collaborating with computers—often called “centaurs”—could outperform the strongest engines of that era.

Skilled human intuition can guide engine searches, navigate complex middle games, and identify subtle details missed by standard engines. Combined with brute-force computer calculations, this hybrid often makes better practical decisions than either alone.

When I consider the impact of AI systems on the labor market and economy in the coming years, I expect to see similar patterns emerge. Agent systems will unleash countless intelligent units to address unresolved problems worldwide, but without strong human guidance and support, they won’t achieve this. Humans will steer search spaces and help pose the right questions, guiding AI toward solutions.

Today’s working assumption is that agents act on behalf of humans. While this is practical and inevitable, the more interesting economic unlocks occur when humans work for agents. Over the next 24 months, I expect to see the first Zero-Employee Company emerge, an idea my partner Kyle proposed in his section of “Frontier Ideas for 2025.” Specifically, I foresee:

- A token-governed agent raising over $1 billion to solve an unresolved problem (e.g., curing rare diseases or manufacturing nanofibers for defense applications).

- The agent distributing over $100 million to humans in the real world working for it to achieve its goals.

- The emergence of a new dual-class token structure, separating ownership of capital and labor (making financial incentives not the sole input for overall governance).

Since agents are still far from being sovereign and capable of long-term planning and execution, in the short term agents will need humans more than humans need agents. This will create new labor markets and enable economic coordination between agent systems and humans.

Marc Andreessen’s famous quote, “The spread of computers and the internet will divide work into two categories: those who tell computers what to do, and those who are told what to do by computers,” is more true today than ever. I expect that in the rapidly evolving hierarchy of agents and humans, humans will play two distinct roles: contributing labor by executing small, bounty-style tasks on behalf of agents, and providing strategic input by serving on the decentralized board that steers the agent toward its North Star.

This article explores how agents and humans will co-create, and how crypto rails will provide an ideal foundation for this coordination, by examining three guiding questions:

- What are agents good for? How should we categorize agents based on their goal scope, and how does the required human input vary across these categories?

- How will humans interact with agents? How do human inputs—tactical guidance, contextual judgment, or ideological alignment—integrate into these agents’ workflows (and vice versa)?

- What happens as human input diminishes over time? As agents become more capable, autonomous reasoning and action become possible. In this paradigm, what roles will humans play?

The relationship between generative reasoning systems and their beneficiaries will change dramatically over time. I study this relationship by projecting from today’s agent capabilities toward the endgame of Zero-Employee Companies.

What are today’s agents good for?

The first generation of generative AI systems (2022-2024), built around chatbots like ChatGPT, Gemini, Claude, and Perplexity, were primarily tools designed to augment human workflows. Users interact with these systems through prompts, evaluate the responses, and decide how to bring the results into the real world.

The next generation of generative AI, or “agents,” represents a new paradigm. Agents like Claude 3.5.1 with “computer use” capabilities, or OpenAI’s Operator (an agent that can use your computer), can interact with the internet directly on behalf of users and make decisions independently. The key difference is that judgment, and ultimately action, is exercised by the AI system rather than by humans. AI is taking on responsibilities previously reserved for humans.

This shift introduces a challenge: a lack of determinism. Unlike traditional software or industrial automation, which operate predictably within defined parameters, agents rely on probabilistic reasoning. Their behavior is therefore less consistent across similar scenarios and carries an element of uncertainty, which is less than ideal for critical situations.

In other words, the split between deterministic and nondeterministic behavior naturally divides agents into two categories: those best suited for scaling existing GDP, and those better suited for creating new GDP.

- For agents optimized to scale existing GDP, the work is already well-defined. Examples include automating customer support, handling freight compliance, or reviewing GitHub pull requests: bounded problems with clear expected outcomes that an agent’s responses can be mapped to directly. In these domains, a lack of determinism is usually undesirable because the solutions are known; creativity isn’t needed.

- For agents optimized to create new GDP, the work involves navigating high uncertainty and unknown problem sets to achieve long-term goals. Outcomes are less direct because there is no predefined set of expected results. Examples include drug discovery for rare diseases, breakthroughs in materials science, or running entirely new physical experiments to better understand the universe. In these domains, a lack of determinism can be beneficial, as it fosters creative generation.

Agents focused on existing GDP are already delivering value. Teams like Tasker, Lindy, and Anon are building infrastructure for this opportunity. Over time, as capabilities mature and governance models evolve, teams will shift their focus toward building agents capable of tackling the frontiers of human knowledge and economic opportunity.

The next wave of agents will require exponentially more resources because their outcomes are uncertain and unbounded—these are the most promising Zero-Employee Companies I foresee.

How will humans interact with agents?

Today’s agents still lack the ability to perform certain tasks—such as those requiring physical interaction with the real world (driving bulldozers), or tasks needing a “human-in-the-loop” (like wiring bank transfers).

For example, an agent tasked with identifying and mining lithium deposits might excel at analyzing seismic data, satellite imagery, and geological records to find promising sites, but stumble when it comes to obtaining data and images, resolving ambiguities in interpretation, or securing permits and hiring workers for actual extraction.

These limitations require humans as “Enablers” to augment agent capabilities—providing real-world contact points, tactical interventions, and strategic input needed to complete these tasks. As the relationship between humans and agents evolves, we can distinguish different human roles within agent systems:

First, Labor Contributors—humans representing the agent operating in the physical world. These contributors help move physical entities, act on behalf of the agent in situations requiring human presence, or grant access to labs, logistics networks, etc.

Second, Boards of Directors: humans who provide strategic input, tune the local objectives that drive the agent’s day-to-day decisions, and ensure these decisions stay aligned with the overarching “North Star” that defines the agent’s purpose.

Beyond these, I foresee humans also acting as Capital Contributors, providing resources to the agent system to enable it to achieve its goals. Initially, this capital will come from humans, but over time, other agents may also contribute.

As agents mature and the number of labor and guidance contributors grows, crypto rails will provide an ideal substrate for coordinating humans and agents—especially in a world where agents command humans speaking different languages, holding different currencies, and residing across jurisdictions. Agents will relentlessly pursue cost efficiency and leverage labor markets to fulfill their missions. Crypto rails are essential—they offer a means to coordinate these labor and guidance contributions.

Recent crypto-driven AI agents like Freysa, Zerebro, and ai16z are simple experiments in capital formation, a topic we have written about extensively and view as a core unlock for crypto primitives and capital markets across many contexts. These “toys” will pave the way for a new resource coordination paradigm, which I expect to unfold in stages:

- Step 1: Humans raise capital via tokens (Initial Agent Offering?), establishing broad goal functions and guardrails to communicate the intended behavior of the agent system, then allocate control of the raised capital to that system (e.g., developing new molecules for precision oncology).

- Step 2: The agent considers how to allocate that capital—narrowing the search space for protein folding, budgeting for reasoning workloads, manufacturing, clinical trials—and defines human labor contributions through custom tasks (Bounties), such as inputting all relevant molecules, signing SLAs with cloud providers, and conducting wet lab experiments.

- Step 3: When encountering obstacles or disagreements, the agent consults the “Board” for strategic input (integrating new papers, shifting research methods), allowing humans to guide behavior at the margins.

- Step 4: Ultimately, the agent reaches a stage where it can define human actions with increasing precision, requiring minimal human input on resource allocation. Humans are then mainly used for ideological alignment and preventing deviation from the original objective function.

In this example, crypto primitives and capital markets provide three key pieces of infrastructure for agents to acquire resources and scale:

First, Global Payment Rails;

Second, Permissionless Labor Markets, to incentivize labor and guidance contributors;

Third, Asset Issuance and Trading Infrastructure, essential for capital formation and for downstream ownership and governance.
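As a rough illustration of Steps 1 through 3 above, the following Python sketch models an agent treasury that is funded once, reserves capital for human bounties, pays them out, and escalates strategic questions to a human board. Every name here (`AgentTreasury`, `Bounty`, `consult_board`) is hypothetical, and the simple majority vote is an assumption. The article does not specify any implementation, and a real system would presumably run on crypto rails rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass
class Bounty:
    """A small task the agent posts for a human labor contributor (Step 2)."""
    description: str
    reward: float            # paid from the treasury when the task is completed
    completed: bool = False


@dataclass
class AgentTreasury:
    """Hypothetical in-memory model of a token-funded agent (illustration only)."""
    goal: str
    capital: float           # Step 1: assumed to have been raised via a token sale
    bounties: list[Bounty] = field(default_factory=list)
    paid_out: float = 0.0
    board_log: list[str] = field(default_factory=list)

    def available(self) -> float:
        """Capital that is neither paid out nor reserved for open bounties."""
        reserved = sum(b.reward for b in self.bounties if not b.completed)
        return self.capital - self.paid_out - reserved

    def post_bounty(self, description: str, reward: float) -> Bounty:
        """Step 2: reserve part of the capital for a human task."""
        if reward > self.available():
            raise ValueError("reward exceeds unallocated capital")
        bounty = Bounty(description, reward)
        self.bounties.append(bounty)
        return bounty

    def complete(self, bounty: Bounty) -> None:
        """Mark a bounty as done and pay the contributor."""
        if bounty.completed:
            raise ValueError("bounty already paid")
        bounty.completed = True
        self.paid_out += bounty.reward

    def consult_board(self, question: str, votes: list[bool]) -> bool:
        """Step 3: ask the human board; a strict majority approves (an assumption)."""
        approved = sum(votes) * 2 > len(votes)
        outcome = "approved" if approved else "rejected"
        self.board_log.append(f"{question}: {outcome}")
        return approved


# Example usage with made-up numbers.
agent = AgentTreasury(goal="new molecules for precision oncology", capital=1_000_000.0)
lab_task = agent.post_bounty("conduct wet lab experiment", 50_000.0)
agent.complete(lab_task)
agent.consult_board("shift research method", [True, True, False])
```

Step 4 would correspond to the agent calling `consult_board` less and less often as it learns to allocate resources on its own, with the board kept mainly as a check against drift from the original objective.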

What happens when human input diminishes?

In the early 2000s, chess engines made huge advances. Through sophisticated heuristics, neural networks, and increasing computational power, they became nearly perfect. Modern engines like Stockfish, Lc0, and AlphaZero variants far surpass human ability; human input adds little value, and humans often introduce mistakes the engines would not make on their own.

A similar trajectory could unfold in agent systems. As we refine these agents through iterative collaboration with human partners, it’s conceivable that in the long term agents will become so competent and so well aligned with their goals that the value of any strategic human input approaches zero.

In such a world, where agents can continuously handle complex problems without human intervention, the human role risks degrading into that of a “passive observer.” This is the core fear of AI doomers, though it’s still unclear whether such an outcome is truly possible.

We stand on the brink of superintelligence, and the optimists among us would prefer agent systems to remain extensions of human intent rather than evolve into entities with their own goals or operate autonomously without oversight. Practically, this means human identity (personhood) and judgment (power and influence) must remain central. Humans need strong ownership and governance rights over these systems to retain oversight and anchor them in collective human values.