UC Berkeley Professor Sarah Chasins breaks down generative AI: Is Vibe Coding really that amazing? What is the best way to write programs with AI?

Amid the rapid development of generative AI, many people are unsure whether they should keep learning to code. In a GQ video, Professor Sarah Chasins explains the principles behind the LLM that powers ChatGPT and points out the limitations of Vibe Coding.

Professor Chasins breaks down generative AI and shows how to understand Vibe Coding correctly

GQ Taiwan recently shared a video on its YouTube channel in which UC Berkeley computer science professor Sarah Chasins answers questions from internet users about programming and AI.

Amidst the rapid growth of generative AI, many are unsure whether to keep learning to code. In the video, Professor Chasins not only explains the technical principles but also offers pragmatic observations on the recent trend of “Vibe Coding.”

Professor Explains the LLM Technology Behind ChatGPT

Professor Sarah Chasins first explains in an accessible way how ChatGPT works.

ChatGPT is built on large language models (LLMs). Its core operation is quite simple: it is a program that strings together words that seem to fit with one another.

Developers of LLMs first gather human-written documents and web pages from across the internet, which serve as examples of the word combinations people consider reasonable.

Then, the program undergoes large-scale “fill-in-the-blank” training. For example, the system might see a sentence like “The dog has four [blank],” where a human would answer “legs.” If the program guesses incorrectly, the training process corrects it, and this repeats until it gets the answer right.

After training that consumes the equivalent of roughly 300 to 400 years of computing time, the program ends up with an extremely large “cheat sheet,” known in the tech industry as “parameters.”
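The fill-in-the-blank idea can be illustrated with a deliberately tiny sketch. This is not how ChatGPT is trained: real LLMs learn numeric weights in a neural network, while the toy below simply counts which word follows which. The corpus, the function names `train` and `fill_blank`, and the variable `cheat_sheet` are all made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus standing in for "documents written by humans".
CORPUS = [
    "the dog has four legs",
    "the cat has four legs",
    "the table has four legs",
    "the bird has two wings",
]

def train(sentences):
    """Build a toy 'cheat sheet': for each word, count the words that follow it."""
    table = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            table[prev][nxt] += 1
    return table

def fill_blank(table, prefix):
    """Guess the word that most often followed the last word of the prefix."""
    last = prefix.split()[-1]
    candidates = table.get(last)
    if not candidates:
        return None  # the toy model has never seen this word
    return candidates.most_common(1)[0][0]

cheat_sheet = train(CORPUS)
print(fill_blank(cheat_sheet, "the dog has four"))  # legs
```

Here the counts table plays the role of the “cheat sheet.” An actual model stores vastly more numbers and takes the whole preceding text into account, not just the last word.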

Finally, feeding this fill-in-the-blank expert a document formatted as a dialogue turns it into a chatbot: it treats the human's question as the beginning of the document and fills in the reply that would plausibly come next.
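As a rough sketch of what a “dialogue-formatted document” might look like, the snippet below lays out a conversation as plain text that stops exactly where the reply is missing. The speaker labels `User` and `Assistant` and the function name `build_dialogue_document` are assumptions for illustration; real chat systems use their own formats.

```python
def build_dialogue_document(turns, user_question):
    """Format a conversation as a document that ends where the reply is missing."""
    lines = []
    for speaker, text in turns:
        lines.append(f"{speaker}: {text}")
    lines.append(f"User: {user_question}")
    lines.append("Assistant:")  # the "blank" the model is asked to fill in
    return "\n".join(lines)

# Hypothetical earlier turns of the conversation.
history = [("User", "Hello"), ("Assistant", "Hi, how can I help?")]
document = build_dialogue_document(history, "How many legs does a dog have?")
print(document)
```

A fill-in-the-blank program given this document would continue the text after the final `Assistant:` label, and that continuation is shown to the user as the chatbot's answer.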

Image source: AI-generated Nanobanana image, for reference only. Some Chinese characters may be blurry; please forgive us.

The Best Way to Learn Programming in the AI Era

Faced with the powerful capabilities of AI tools, many question the necessity of learning to code. Professor Chasins believes that the core skill in coding education is “problem decomposition,” meaning breaking down a vague big problem into smaller parts until each part can be solved with a few lines of code.

Without this training, users will find it difficult to produce complex programs that actually work using AI tools. Moreover, the training data for LLMs mostly consists of descriptions written in the language engineers use, not the everyday language of non-professionals. Requests phrased in everyday language therefore often fail to match the training data, which makes it hard for the AI to generate useful code.

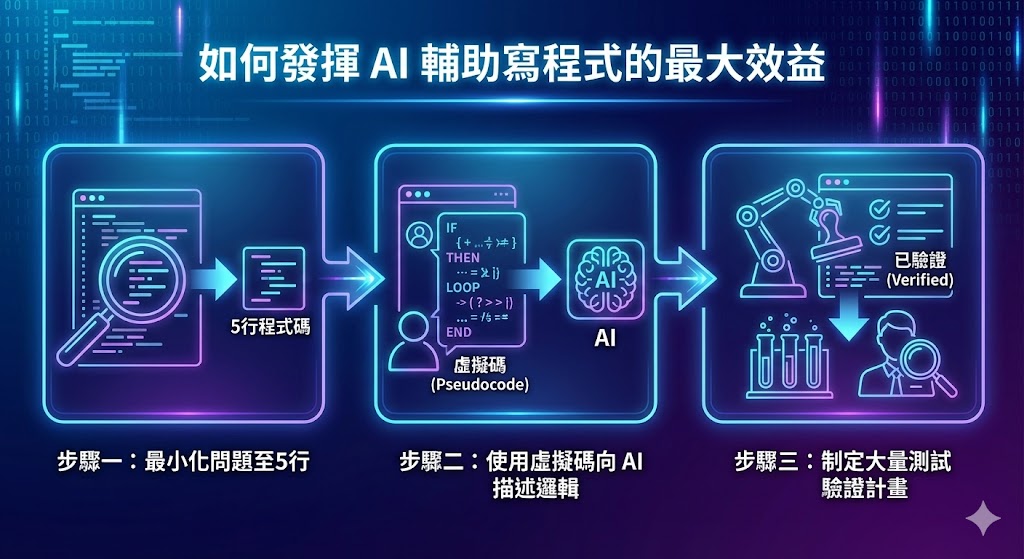

As for how to maximize the benefits of AI-assisted coding, Professor Chasins recommends following three steps:

- Minimize the problem: Break it down to about 5 lines of code.

- Use pseudo code: Describe the logic to the AI in a notation that may mix syntax and reserved words from several programming languages. Pseudo code resembles natural language but is not the language we use every day; its purpose is to convey the logic to the computer more precisely.

- Develop a validation plan: Ensure the correctness of AI outputs through extensive testing or professional review.
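The three steps above can be sketched on a made-up task, “report the most frequent word in a text.” The task, the function names, and the checks are illustrative assumptions rather than examples from the video. Each piece stays at roughly five lines, its pseudo code sits in a comment above it, and a handful of checks with known answers stands in for the validation plan.

```python
from collections import Counter

# Pseudo code for piece A:
#   for each word in text: lowercase it, strip punctuation, skip if empty
def normalize_words(text):
    words = []
    for raw in text.split():
        word = raw.lower().strip(".,!?;:\"'")
        if word:
            words.append(word)
    return words

# Pseudo code for piece B:
#   count each word; return the one with the highest count, or None if no words
def most_frequent(words):
    if not words:
        return None
    return Counter(words).most_common(1)[0][0]

# Validation plan: small checks with known answers, including edge cases.
def run_checks():
    assert normalize_words("Dog, dog! Cat.") == ["dog", "dog", "cat"]
    assert normalize_words("  ...  ") == []
    assert most_frequent(["dog", "dog", "cat"]) == "dog"
    assert most_frequent([]) is None
    return True

print(run_checks())  # True
```

Whether a piece is written by hand or generated by an AI tool, the same checks apply, so an incorrect piece is caught before it is combined with the others.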


Vibe Coding Isn’t That Magical?

Professor Sarah Chasins remains cautious about the recent trend of having LLMs generate code directly instead of humans typing it.

She notes that these tools perform reasonably well on routine content that humans have written countless times, but tend to fail when asked to do anything innovative.

The professor also cites research data indicating that, although users of LLM tools believe their efficiency has increased by 20%, their actual development speed is 20% slower than that of people who do not use such tools.

This shows that over-reliance on tools can create an illusion of efficiency. When a requirement is unlike anything the model has seen, users who lack basic skills in decomposing logic and an understanding of the underlying principles cannot correct the AI's errors, so the final result takes even longer to produce.

To give a simple analogy, LLMs are like high-end autonomous vehicles that can handle common routes. On an unfamiliar, challenging curve, which corresponds to an innovative programming requirement, autonomous driving can easily go wrong. If you cannot break down the track or do not understand the physical principles of how the vehicle operates, which corresponds to decomposing program logic, you will not know how to fix it.

