OpenAI’s Partnership with the U.S. Military Sparks a Wave of Backlash as Claude Tops the Download Charts: The Ethical and Political Struggle Behind It

OpenAI collaborates with the U.S. military, sparking protests. Anthropic, which refuses military surveillance, gains public support, propelling Claude to the top of the U.S. download charts, highlighting the public’s high regard for privacy and AI ethics.

OpenAI’s Contract with U.S. Department of Defense Sparks PR Crisis

Public debate about the boundaries between tech companies and the military has intensified since February 28, when OpenAI announced a confidential network deployment partnership with the U.S. Department of Defense, and after the U.S. government labeled its competitor Anthropic a “supply chain risk.” The Claude app has since topped the U.S. download charts.

In response to external criticism, OpenAI CEO Sam Altman issued a statement emphasizing that the contract explicitly prohibits using AI systems for large-scale domestic surveillance of U.S. citizens, and that the company will retain control over safety mechanisms. Altman also admitted that the company was too hasty in announcing the partnership, which led to negative perceptions of opportunism and recklessness.

U.S. Community Forums Protest, Questioning OpenAI’s Decision-Making Standards

Many users have expressed disappointment with OpenAI’s current stance. On Reddit, some argue that the company has betrayed its original vision of being human-centered and emphasizing safe development.

In replies to Altman’s statement, users criticized OpenAI’s lack of transparency and asked why Anthropic is considered a national security threat when it offered the same safety terms that the Department of Defense accepted.

Others pointed out that the statement’s vague language regarding commercial data collection and unintentional surveillance could leave legal loopholes for monitoring non-U.S. citizens or future military applications.

CNBC Exposé: OpenAI Claims No Control Over Operations

According to CNBC, Altman told an internal OpenAI meeting on March 3 that the company has no operational decision-making authority over how the U.S. Department of Defense uses its AI technology.

CNBC, which reviewed parts of the meeting records, reports that Altman told employees they have no say in matters such as strikes against Iran or invasions of Venezuela, whatever their personal opinions.

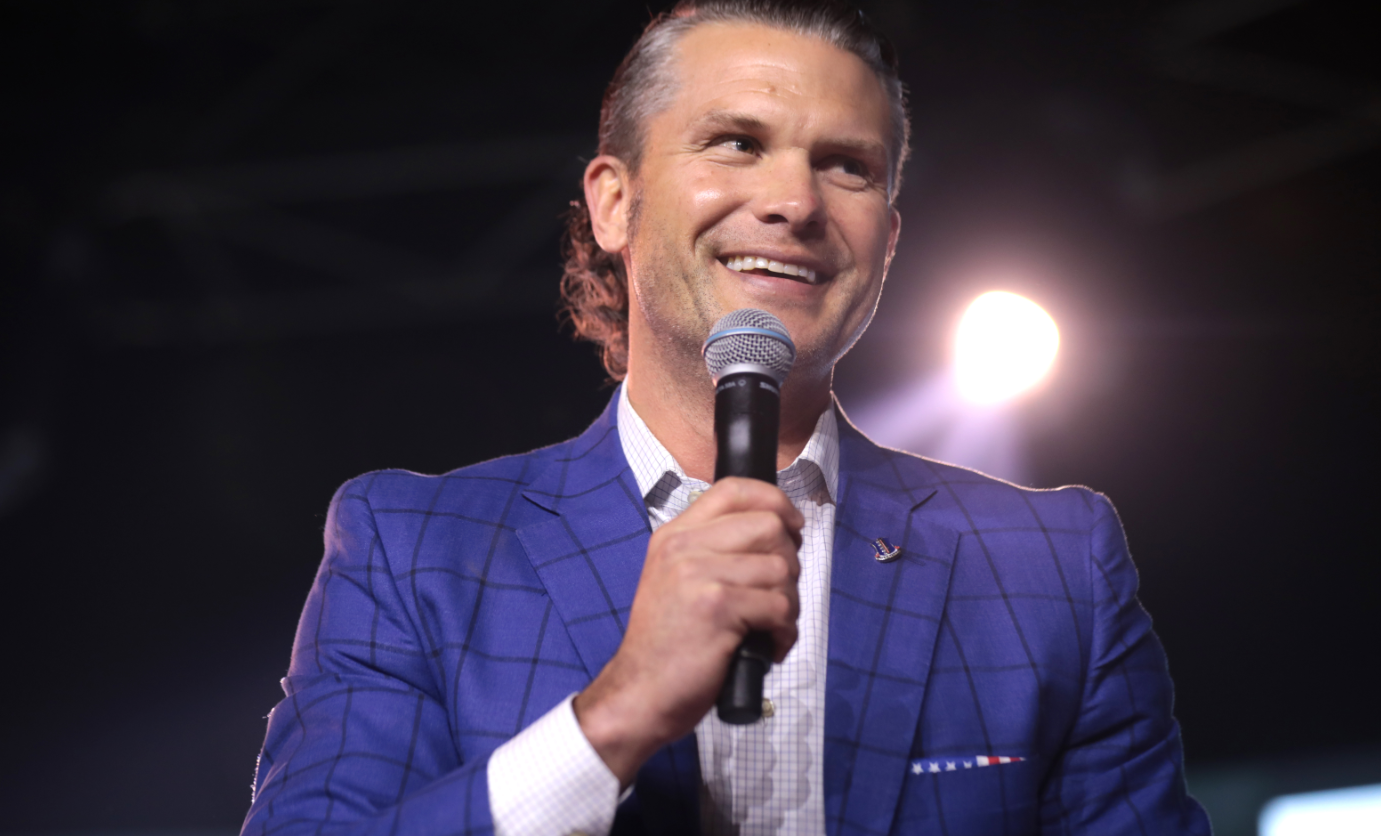

Sources say Altman emphasized that the Pentagon respects OpenAI’s technical expertise and allows the company to establish appropriate safety mechanisms, but that final operational decisions rest with Defense Secretary Pete Hegseth.

U.S. Secretary of Defense Pete Hegseth. Image source: Wikimedia Commons, Gage Skidmore.

The Tug-of-War Between Tech Ethics and National Security

The incident involving the U.S. military and Anthropic has further intensified the conflict between national security, tech ethics, and privacy rights.

Over 200 employees from Google and OpenAI have signed an open letter supporting Anthropic’s decision to maintain safety safeguards. Anthropic firmly states that threats or punishments will not change its bottom line of preventing large-scale surveillance and fully autonomous weapons.

Former NSA contractor and whistleblower Edward Snowden pointed out that the NSA’s XKeyscore system can comprehensively track individuals’ online activities and physical locations, raising concerns that AI-driven surveillance could expand these capabilities.

Additionally, a recent study by King’s College London shows that in simulated geopolitical crises, there is up to a 95% chance that mainstream AI models, such as those from OpenAI and Anthropic, would choose to deploy nuclear weapons.

If governments arbitrarily use supply chain risk labels to force tech companies into compliance, it could set a dangerous precedent, severely disrupting innovation and ethical governance in the U.S. AI industry.

- **Read more:** National Security vs. Ethics: Anthropic Refuses to Remove Claude’s Safety Guardrails, Confronts U.S. Department of Defense

Claude App Tops Downloads — What Does It Mean?

The battle over AI ethics and political power continues. Anthropic plans to challenge the government sanctions in court to defend its business rights and legal boundaries. Meanwhile, OpenAI faces a serious PR crisis and trust issues over how quickly it secured its military contract.

Recent public opinion in the U.S. has also influenced the download rankings of both companies’ apps.

According to Similarweb data on U.S. App Store rankings as of March 1, the Claude app rose three spots among popular free iPhone apps to reach the top of the download chart, while ChatGPT dropped one spot to second place.

This directly reflects the American public’s prioritization of privacy rights and collective resistance to government overreach amid the national security controversy.

Further Reading:

Vitalik Buterin Warns Again About Worldcoin: Altman’s World ID Holds 13 Million Users’ Data, Threatening Internet Freedom

Telegram Founder Reveals No Phone Use: “We’re Running Out of Time as the Free Internet Is Being Destroyed”