An AI Guide for Humanities Professionals

Humanities professionals may not drive world change, but they are the ones who bear its consequences.

At times, the people selling AI tutorials seem to treat AI like magic: find the miraculous prompt, and you can do anything. The reality, of course, is far more complex. Since founding FUNES, we've relied heavily on AI for daily production. Between projects like Fuyou Tiandi and my own writing, human effort alone is no longer enough. That's why we've explored extensively how AI can support our content business and humanities research.

When new colleagues joined, I put together a simple Keynote deck for them. Jia Xingjia heard about it and invited me to present it. My partner Keda and I titled the talk “An AI Usage Guide for Humanities Professionals.” It began as a private session focused on overarching principles; over time, we expanded and refined it.

This guide was never made public until this year, when we launched Shishufeng with Chongqing and discussed it in full for the first time. The following text is adapted, with AI assistance and some abridgment, from the podcast episode “An AI Usage Guide for Humanities Professionals.” For the complete version, listen on the official website or search “Shishufeng” on Xiaoyuzhou or Apple Podcasts.

Over the past year, I've shared these AI practices with many colleagues in content creation, research, and knowledge products. The goal isn't to teach a handful of magic prompts or to treat AI as a cure-all. Instead, it's a methodology: a way to integrate large language models into writing, research, editing, topic selection, data organization, and production workflows without writing code, while keeping the process traceable, supervised, and verifiable, so you can confidently sign your name to your work.

This approach stems from real-world lessons: when content production scales, human labor alone can't keep up; but direct AI generation leads to hallucinations, shortcuts, and AI-sounding text. We had to turn creativity into a production line, and the line into an iterative system.

Rather than offering a list of prompts, I want to share core guiding principles.

Before the Principles: Three Baselines for This Guide

Before diving into methods, let's establish three baselines. These determine both “how you use AI” and “why you use it this way.”

The process must be traceable, supervised, and verifiable

- You can't just want results and ignore the process. For humanities work, black boxes are most dangerous: hallucinations, misquotations, and conceptual drift all occur in the dark.

It must be controllable

- You need to direct how it works, by what standards, where to slow down, and where to be strict. This is production, not a game of chance.

You must still be willing to sign your name

- “Am I willing to put my name on this?” is the ultimate quality check. If not, it's rarely a moral issue—it's because your intent wasn't realized in the process, so quality can't be assured.

Principle 0: Don’t Make Wishes to AI—Treat It as a Workbench

Many treat AI as a wish-granting machine:

“Give me a good joke,” “Write a good article for me,” “Explain this paper.”

But “explain” alone has countless interpretations: for laypeople, undergraduates, graduate students, or peers. AI can't know your background, goals, preferences, or standards by default. If you don't specify, it defaults to the path of least resistance.

Treating large models as a workbench means not asking for finished results, but using AI tools within your own process. Clarify the goals, standards, and steps.

For example, asking AI to explain a paper

Transform a wishful prompt (“explain this paper”) into a workbench task:

- Define the audience: smart, curious graduate students who aren't field experts
- Define the explanation style: heuristic, step-by-step, academically rigorous
- Define the structure: significance, background, research process, key technical points, then insights
- Define the tone: respectful, not condescending, not assuming deep prior knowledge

The more your instructions resemble “assignment requirements,” the less AI acts like AI, and the more it functions as a competent assistant.
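If you keep such briefs in a script or template instead of retyping them, the four requirements above can be captured as a structured object. What follows is a hypothetical Python illustration and not part of the author's toolkit. `WorkbenchTask` and its field names are assumptions. The code only assembles and checks the prompt text; the model call is left to whatever tool you use.

```python
from dataclasses import dataclass, field


@dataclass
class WorkbenchTask:
    """A 'workbench' brief: the task plus the requirements an assistant needs.

    The field names are illustrative, not a standard schema.
    """
    task: str
    audience: str
    style: str
    structure: list[str] = field(default_factory=list)
    tone: str = ""

    def to_prompt(self) -> str:
        # Refuse to build a prompt that is still just a "wish" with no requirements.
        missing = [name for name in ("task", "audience", "style", "tone")
                   if not getattr(self, name).strip()]
        if not self.structure:
            missing.append("structure")
        if missing:
            raise ValueError(f"brief is incomplete, missing: {', '.join(missing)}")
        sections = "\n".join(f"{i}. {part}" for i, part in enumerate(self.structure, 1))
        return (
            f"Task: {self.task}\n"
            f"Audience: {self.audience}\n"
            f"Explanation style: {self.style}\n"
            f"Structure (in this order):\n{sections}\n"
            f"Tone: {self.tone}"
        )


brief = WorkbenchTask(
    task="Explain the attached paper.",
    audience="smart, curious graduate students who aren't field experts",
    style="heuristic, step-by-step, academically rigorous",
    structure=["significance", "background", "research process",
               "key technical points", "insights"],
    tone="respectful, not condescending, not assuming deep prior knowledge",
)
print(brief.to_prompt())
```

The validation step matters more than the formatting. A brief that would only say “explain this paper” is rejected before it ever reaches a model.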

Principle 1: For AI to Succeed, Reflect on Yourself—You Are Responsible

If you hired a secretary, you wouldn’t just say:

“Fix Han Yang’s article on the American Rust Belt.”

You’d add:

Why the article exists, who it's for, where you're stuck, what problem you want solved, untouchable sections, desired style, and what matters most.

AI is no different. Treat it as a diligent, polite colleague who lacks your implicit assumptions. True “prompt engineering” is a matter of responsibility: the task remains yours; AI just helps execute.

When you're dissatisfied with AI output, the most effective first step isn't to conclude “AI failed,” but to ask:

- Did I clarify the “audience/objective/purpose”?
- Did I provide enough background and constraints?
- Did I break down “abstract wishes” into “actionable steps”?
- Did I provide evaluative standards?

Principle 2: Ask at Least Three Models—Each AI Has Its Own “Personality” and Strengths

At our company, I encourage new colleagues to ask three different AIs the same question in their early use. Like people, AIs differ: some excel at writing, others at reasoning, code, or tool use. Even the same company's models or new versions adjust their “style” and “boundaries.”

A simple, effective habit: ask at least three AIs the same question, and you'll quickly get a sense for:

- Which writes better, which reasons better, which searches better, which cuts corners
- Which is best for first drafts, which for reviewing
- Which is better for “topic/structure,” which for “paragraph/sentence”

The value isn't picking the “strongest model,” but managing models as a team—not as a single oracle.
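For readers comfortable with a little scripting, the three-model habit comes down to sending one prompt out to several models and reading the answers side by side. Here is a minimal sketch under stated assumptions. Each model is represented as a plain Python function from prompt to answer. `model_a` through `model_c` are placeholder stand-ins for whichever vendors' APIs you actually use.

```python
from typing import Callable

# A "model" is any function from prompt to answer. In practice each would wrap
# a different vendor's API; the stand-ins below are hypothetical placeholders.
Model = Callable[[str], str]


def ask_all(prompt: str, models: dict[str, Model]) -> dict[str, str]:
    """Send the same prompt to every model and keep the answers side by side."""
    answers: dict[str, str] = {}
    for name, model in models.items():
        try:
            answers[name] = model(prompt)
        except Exception as exc:  # one failing model shouldn't stop the comparison
            answers[name] = f"[error: {exc}]"
    return answers


def compare(answers: dict[str, str]) -> str:
    """Format the answers so a human can judge them against each other."""
    blocks = [f"=== {name} ({len(text.split())} words) ===\n{text}"
              for name, text in answers.items()]
    return "\n\n".join(blocks)


models = {
    "model_a": lambda p: "A concise answer.",
    "model_b": lambda p: "A longer answer that cites two sources.",
    "model_c": lambda p: "A step-by-step answer.",
}
answers = ask_all("Summarize the Rust Belt's decline in one paragraph.", models)
print(compare(answers))
```

The code deliberately stops short of picking a winner. Judging which model writes, reasons, or cuts corners is the human's job.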

Principle 3: AI Isn’t Omniscient—Treat It as a “Strong Undergraduate”

A practical expectation:

AI’s common sense ≈ a top university undergraduate.

If you think “even a strong undergrad might not know this,” assume AI doesn't either—or that it will “fake it convincingly” when it doesn't know.

This leads to two direct actions:

You must teach it anything beyond common sense

- For example: want jokes, unique copy, or highly specialized arguments? Don't just say “make it good.” Provide examples, standards, off-limits areas, and source material. If it would take you a while to explain to a friend what makes a piece of writing good, AI won't know it by default.

Treat it as an intern, not a deity

- It can fill in scaffolding and weave your materials into readable text. But the “scaffolding” and “direction” are still yours.

Principle 4: Guide AI Step by Step—White-Box, Multi-Step Is More Reliable Than Black-Box, One-Shot

AI’s strength isn’t “immediate correct answers,” but reliably completing small steps within your process. The more you demand “one-shot results,” the more likely it will cut corners.

A clear example is TTS (text-to-speech) or narration scripts. Instead of saying “watch out for polyphonic characters (characters with more than one pronunciation), don't misread them,” break the task into steps:

- Mark pauses/emphasis/pace changes
- Identify potential polyphonic characters
- Cross-check with dictionaries or authoritative sources
- Pre-mark common, easily misread characters
- When necessary, substitute with unambiguous homophones

Humans perform these “obviously correct” steps by default, but AI doesn't. If you don't specify them, AI will take the path of least resistance.
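This minimal Python sketch makes two of these steps concrete: the second step (flag potential polyphonic characters) and the fourth (pre-mark them with a confirmed reading). The five-entry `POLYPHONES` table is a hypothetical toy. A real workflow would check each flag against a proper dictionary, which is the third step. Anything still unconfirmed is marked `[?]` for a human to resolve.

```python
# Hypothetical, deliberately tiny table; a real workflow would consult a full dictionary.
POLYPHONES = {
    "行": ("xíng", "háng"),
    "长": ("cháng", "zhǎng"),
    "重": ("zhòng", "chóng"),
    "乐": ("lè", "yuè"),
    "还": ("hái", "huán"),
}


def find_polyphones(text: str) -> list[tuple[int, str]]:
    """Flag every position holding a character with more than one reading."""
    return [(i, ch) for i, ch in enumerate(text) if ch in POLYPHONES]


def premark(text: str, confirmed: dict[int, str]) -> str:
    """Annotate each flagged character with its reading, e.g. 行[háng].

    `confirmed` maps positions to readings already checked against a dictionary;
    any flagged position without a confirmed reading is marked [?] for a human.
    """
    flagged = dict(find_polyphones(text))
    out = []
    for i, ch in enumerate(text):
        out.append(ch)
        if i in flagged:
            out.append(f"[{confirmed.get(i, '?')}]")
    return "".join(out)


script = "银行还在营业"
print(find_polyphones(script))        # positions to cross-check
print(premark(script, {1: "háng"}))   # 还 stays [?] until someone confirms it
```

Each step produces output that can be inspected on its own. That inspectability is the white-box property: a misread character can be traced to the step that missed it.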

Principle 5: Industrialize Before AI-izing—You Can’t Leap from Inspiration to Automation

If your writing or research workflow is random, inspiration-driven, and disorganized, AI can't help. It can only handle what's “describable and repeatable.”

A more practical path:

- First, turn work into a “production line”: divisible, reusable, quality-controlled
- Then delegate sub-steps to AI: let it be a workstation, not a deity

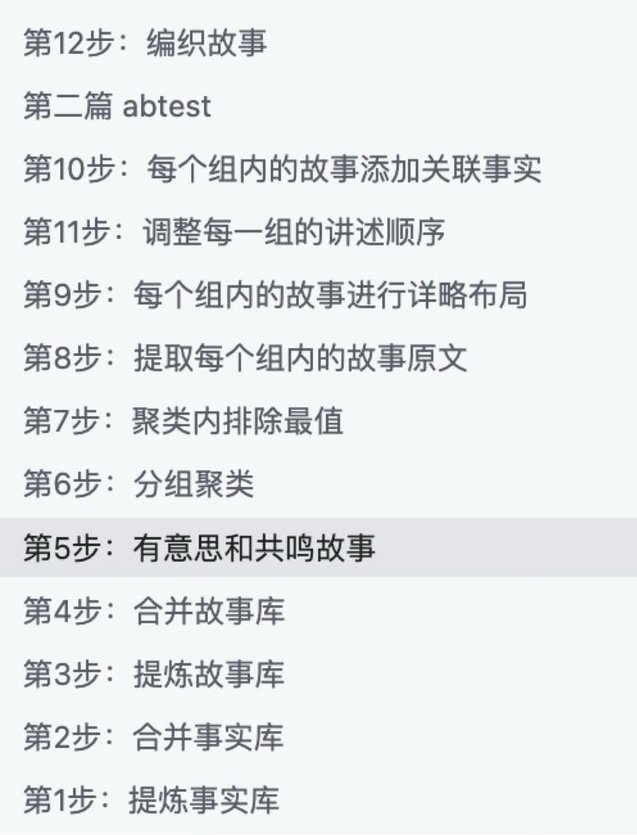

We did a crucial exercise: breaking down the thought process behind my nonfiction writing, including:

- Why open with this story
- Why choose this sentence
- How to weigh and select examples
- How to transition and conclude
- How to connect small stories to the larger narrative

Eventually, we split it into dozens of steps, with different AIs handling each. The result:

The model didn't become stronger overnight, but the process chained its incremental capabilities together.

When you can clearly describe “how my article is made,” you'll realize: the real quality ceiling isn't “which model you use,” but whether your workflow is explicit.

Some sample steps from our production line tests

I strongly recommend listening to the program for more detail.
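A production line can be sketched as an ordered list of named stages. Each stage is assigned to a particular model, and every intermediate output is recorded so the process stays traceable. This is a hypothetical Python illustration, not FUNES's actual pipeline. The stage names, the `model_a`/`model_b`/`model_c` labels, and the trivial string transforms standing in for model calls are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str                   # e.g. "draft opening"
    model: str                  # which AI handles this step (hypothetical label)
    run: Callable[[str], str]   # takes the previous stage's output, returns new text


def run_line(stages: list[Stage], material: str) -> tuple[str, list[dict]]:
    """Run the stages in order, recording every intermediate output for review."""
    trace = []
    text = material
    for stage in stages:
        text = stage.run(text)
        trace.append({"stage": stage.name, "model": stage.model, "output": text})
    return text, trace


# Stand-in transforms; in practice each `run` would call the assigned model.
line = [
    Stage("extract key facts", "model_a", lambda t: t.upper()),
    Stage("draft opening", "model_b", lambda t: "OPENING: " + t),
    Stage("tighten wording", "model_c", lambda t: t.replace("  ", " ")),
]
final, trace = run_line(line, "notes  from the archive")
for step in trace:
    print(f"{step['stage']} [{step['model']}]: {step['output']}")
```

The trace is the point of the design. When the final draft disappoints, you can see which stage went wrong and improve that step, rather than rewriting the result by hand.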

Principle 6: Anticipate AI’s Shortcuts—It Conserves Compute, So You Eliminate “Format Barriers”

AI systematically takes shortcuts: if it can avoid opening a webpage, it will; if it can skip a PDF, it will. It's not malicious—given compute and time constraints, it defaults to the easiest path.

Your role: focus AI's resources on “understanding text,” not “processing formats.”

Effective strategies include:

- Convert materials to plain text or Markdown before input
- Copy web content as clean text (remove navigation, ads, footers)
- For lengthy materials, first extract facts or structure, then have AI write
- Standardize PDFs/EPUBs/webpages into a searchable TXT database

You'll notice that many people resist this grunt work, thinking “the machine should do it.” Yet in human-AI collaboration the opposite holds: if you handle some of the mechanical tasks yourself, AI's intelligence becomes sharper and more reliable.
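Here is one example of this groundwork. The sketch below strips a saved web page down to clean text using only Python's standard `html.parser` module. The set of tags treated as page furniture (`nav`, `header`, `footer`, `aside`, plus scripts and styles) is an assumption. Ads usually live in site-specific containers, so you would extend `SKIP_TAGS` or add class-based rules for the sites you actually use.

```python
from html.parser import HTMLParser

# Tags whose contents are page furniture rather than the article itself (an assumption).
SKIP_TAGS = {"script", "style", "nav", "header", "footer", "aside"}
# Tags that start a new line of text in the output.
BLOCK_TAGS = {"p", "div", "h1", "h2", "h3", "li", "br", "blockquote"}


class TextExtractor(HTMLParser):
    def __init__(self):
        super().__init__()
        self.skip_depth = 0   # > 0 while inside a skipped element
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1
        elif tag in BLOCK_TAGS and not self.skip_depth:
            self.parts.append("\n")

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.parts.append(data)


def html_to_text(html: str) -> str:
    """Return the page's readable text, one block per line, whitespace normalized."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    lines = (" ".join(line.split()) for line in "".join(parser.parts).splitlines())
    return "\n".join(line for line in lines if line)


page = ("<html><head><style>p{}</style></head><body><nav>Home | About</nav>"
        "<h1>Title</h1><p>First   paragraph.</p><footer>(c) 2024</footer></body></html>")
print(html_to_text(page))
```

Files cleaned this way can be saved as `.txt` and collected into the searchable text database mentioned above.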

Principle 7: Remember Context Is Limited—Favor “Compression” Tasks, Not “Expansion from Nothing”

AI has a context window—a memory limit. Feed it 20,000 words and it may only retain a portion; 200,000, and it might just skim headers. Imagine locking someone in a room with a 200,000-word book for a day, then asking for a recitation—that's roughly AI's “memory.”

A counterintuitive but vital insight:

Compression is easier than expansion

- Compressing 1,000,000 words to 10,000 is more reliable than expanding 10,000 to 1,000,000.

This changes your approach:

- Don't use a 100-word prompt to request a full paper
- Instead, feed as much material as possible (in batches, via retrieval-augmented generation (RAG), etc.), then have AI compress it into structure, arguments, and main text

When writing, you already work this way: read widely, distill, organize, then write. Expect the same of AI, and don't hold it to a double standard by expecting it to create from nothing.
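Map-reduce summarization is one common way to apply “compression, not expansion” to material that exceeds the context window. You split the material into batches that fit, compress each batch, then compress the merged notes. The Python sketch below assumes a `summarize` function that you supply. `first_sentence` is only a crude stand-in so the example runs without a model. The word budget is a rough proxy for the model's real token limit.

```python
import re
from typing import Callable


def chunk(text: str, max_words: int) -> list[str]:
    """Split text into batches of at most max_words words, breaking only between paragraphs.

    A single paragraph longer than max_words is kept whole rather than cut mid-thought.
    """
    batches, current, count = [], [], 0
    for para in text.split("\n\n"):
        n = len(para.split())
        if current and count + n > max_words:
            batches.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        batches.append("\n\n".join(current))
    return batches


def compress(text: str, summarize: Callable[[str], str],
             max_words: int, max_rounds: int = 5) -> str:
    """Summarize each batch, merge the notes, and repeat until everything fits one batch."""
    for _ in range(max_rounds):
        batches = chunk(text, max_words)
        if len(batches) == 1:
            break
        text = "\n\n".join(summarize(b) for b in batches)
    return summarize(text)


def first_sentence(text: str) -> str:
    """Crude stand-in for a model call: keep only the first sentence."""
    return re.split(r"(?<=\.)\s+", text.strip())[0]


seen = []  # word counts of every input handed to the "model"


def recording_summarizer(text: str) -> str:
    seen.append(len(text.split()))
    return first_sentence(text)


document = "\n\n".join(f"Fact {i} is important. Detail {i} follows." for i in range(6))
print(compress(document, recording_summarizer, max_words=14))
```

A real `summarize` would carry instructions such as “keep every fact, quotation, and source.” The structure stays the same: the model is only ever asked to shrink text that fits in its window.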

Principle 8: Resist the “I'll Just Fix It” Impulse—Iterate the Process, Not the Result

Skilled writers often stumble with AI:

AI produces a 59-point draft; you think you can edit it to 80, then end up rewriting; after rewriting, you decide “I'll do it myself,” and stop using AI.

The solution isn't harder editing, but shifting focus upstream:

- Don't aim for AI to produce a perfect 100
- Target a production line that reliably delivers 75–80
- Iterate the process to raise the average, not perfect each output

Principle 9: Treat the Workflow as Product Iteration—Reliability Is Value

A system that reliably delivers a 70-point draft is valuable not because it “feels like you,” but because:

- You get a usable draft at near-zero cost
- You can focus on higher-level decisions: topic, structure, evidence, style, and trade-offs

You don’t need an omnipotent replacement—just a reliable factory: stable, if not perfect.

Principle 10: Prioritize Quantity—Generate More, Then Select

Asking AI for a single version tends to get you something mediocre. Use quantity to beat the average.

More effective tactics:

- Summaries: request 5 versions at once
- Openings: request 5 at once, run A/B tests
- Topics: request 50 at once, then group and select
- Structures: request 3 sets, then combine
- Phrasings: request 10 different expressions, then pick the best

When you raise the average and volume, “surprise samples” scoring 85 or 90 will emerge. Often, excellence isn't a “stroke of genius,” but a result of statistical selection.
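A quick simulation can illustrate the point about “statistical selection.” The Python sketch below assumes, purely for illustration, that draft quality scores are normally distributed with a mean of 75 and a standard deviation of 5. These numbers are not measurements. `best_of_n` shows the generate-then-select pattern with caller-supplied `generate` and `score` functions. `expected_best` estimates how the best of n drafts improves as n grows.

```python
import random
import statistics
from typing import Callable


def best_of_n(generate: Callable[[], str], score: Callable[[str], float],
              n: int) -> list[tuple[float, str]]:
    """Generate n candidates and return them ranked best-first by the given score."""
    candidates = [generate() for _ in range(n)]
    return sorted(((score(c), c) for c in candidates), reverse=True)


def expected_best(n: int, mean: float = 75, sd: float = 5,
                  trials: int = 2000, seed: int = 0) -> float:
    """Average best score among n drafts, under the assumed normal score distribution."""
    rng = random.Random(seed)
    return statistics.mean(
        max(rng.gauss(mean, sd) for _ in range(n)) for _ in range(trials)
    )


for n in (1, 5, 50):
    print(f"best of {n:>2}: {expected_best(n):.1f}")
```

Under these assumptions, the average best draft should climb from about 75 with one attempt into the mid-80s with 50 attempts, which is the effect described above. With real drafts, the score comes from your own judgment rather than a formula.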

Principle 11: Don’t Overstep—Direct Like a Head Chef: Taste, Give Feedback, Send It Back

If you're the executive chef, you don't personally prep every dish. Instead, you:

- Taste
- Judge if it meets standards
- Give clear feedback (what's wrong, how to fix)
- Send the cook back to redo it

The same applies to AI collaboration. Respect its generative process—teach it your standards instead of fixing every output yourself.

Otherwise, endless “tweaking” will wear you out.
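The head-chef loop can be sketched as generate, review, give feedback, and regenerate. The reviewer encodes your standards, so you never edit the draft by hand. In this hypothetical Python sketch, `toy_generate` and `toy_review` are stand-ins for a model call and for your tasting criteria. The returned feedback history is what you would fold back into the brief, so the next batch starts closer to the mark.

```python
from typing import Callable, Optional

# generate(brief, feedback) -> draft; review(draft) -> None if acceptable, else feedback.
DraftFn = Callable[[str, Optional[str]], str]
ReviewFn = Callable[[str], Optional[str]]


def chef_loop(brief: str, generate: DraftFn, review: ReviewFn,
              max_rounds: int = 3) -> tuple[str, list[str]]:
    """Taste, judge, give feedback, send back; return the last draft and all feedback."""
    draft, feedback, history = "", None, []
    for _ in range(max_rounds):
        draft = generate(brief, feedback)
        feedback = review(draft)
        if feedback is None:          # meets the standard: stop sending it back
            break
        history.append(feedback)
    return draft, history


def toy_generate(brief: str, feedback: Optional[str]) -> str:
    """Stand-in for a model: rambles at first, trims itself when told to."""
    draft = "This opening rambles on about many loosely related things before it gets anywhere."
    return " ".join(draft.split()[:6]) + "." if feedback else draft


def toy_review(draft: str, limit: int = 8) -> Optional[str]:
    """Stand-in for your taste: here, only a word limit."""
    words = len(draft.split())
    return None if words <= limit else f"Too long ({words} words); cut to {limit} or fewer."


draft, history = chef_loop("Write an opening for the Rust Belt piece.", toy_generate, toy_review)
print(draft)
print(history)
```

The loop never edits a draft itself. Each correction arrives as feedback for the next round, which mirrors sending the dish back instead of cooking it yourself.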

The Core Principle: Return to Reality—Materials × Taste Set the Ceiling

In the AI era, the quality of your work is increasingly:

Materials × Taste.

Models will change and methods will evolve, but these two factors remain:

Materials come from the real world

- Given two options for writing:
  - Use the latest model, but only online materials
  - Or use an older model, but with full archives, oral histories, and fieldwork
- The latter often yields better work.

Taste comes from long-term practice

- As “generation” becomes cheap, what's truly scarce is:
  - Knowing what's worth writing
  - Knowing which evidence is solid
  - Knowing which narrative is compelling
  - Willingness to do the legwork: deep dives, thorough research, hands-on investigation

AI changes the efficiency and manner of engaging with materials, but you remain the author, materials the subject, and AI just the tool.

Digging deep and hands-on to collect source material

Conclusion: Turn Anxiety into Expertise

Many struggle with AI not from lack of intelligence, but from being stuck in a “wish—disappointment—abandonment” cycle. The real breakthrough comes from treating AI as a workbench, engineering tasks, white-boxing processes, and developing expertise through practice.

Once you do this, you'll stop concluding that “AI doesn't work.” Instead, you'll become a new kind of professional: able to manage new tools, neither condescending nor reverent, integrating them into your workflow, your reality, and work you proudly sign.

I'm Hanyang. If you're interested in my writing, follow me on X or read more on my blog.

Statement:

- This article is reprinted from [HanyangWang]. Copyright belongs to the original author [HanyangWang]. For reprint concerns, please contact the Gate Learn team. The team will handle your request promptly according to established procedures.
- Disclaimer: The views and opinions expressed in this article are solely those of the author and do not constitute investment advice.
- Other language versions of this article are translated by the Gate Learn team. Unless Gate is credited, do not copy, distribute, or plagiarize the translated article.
